
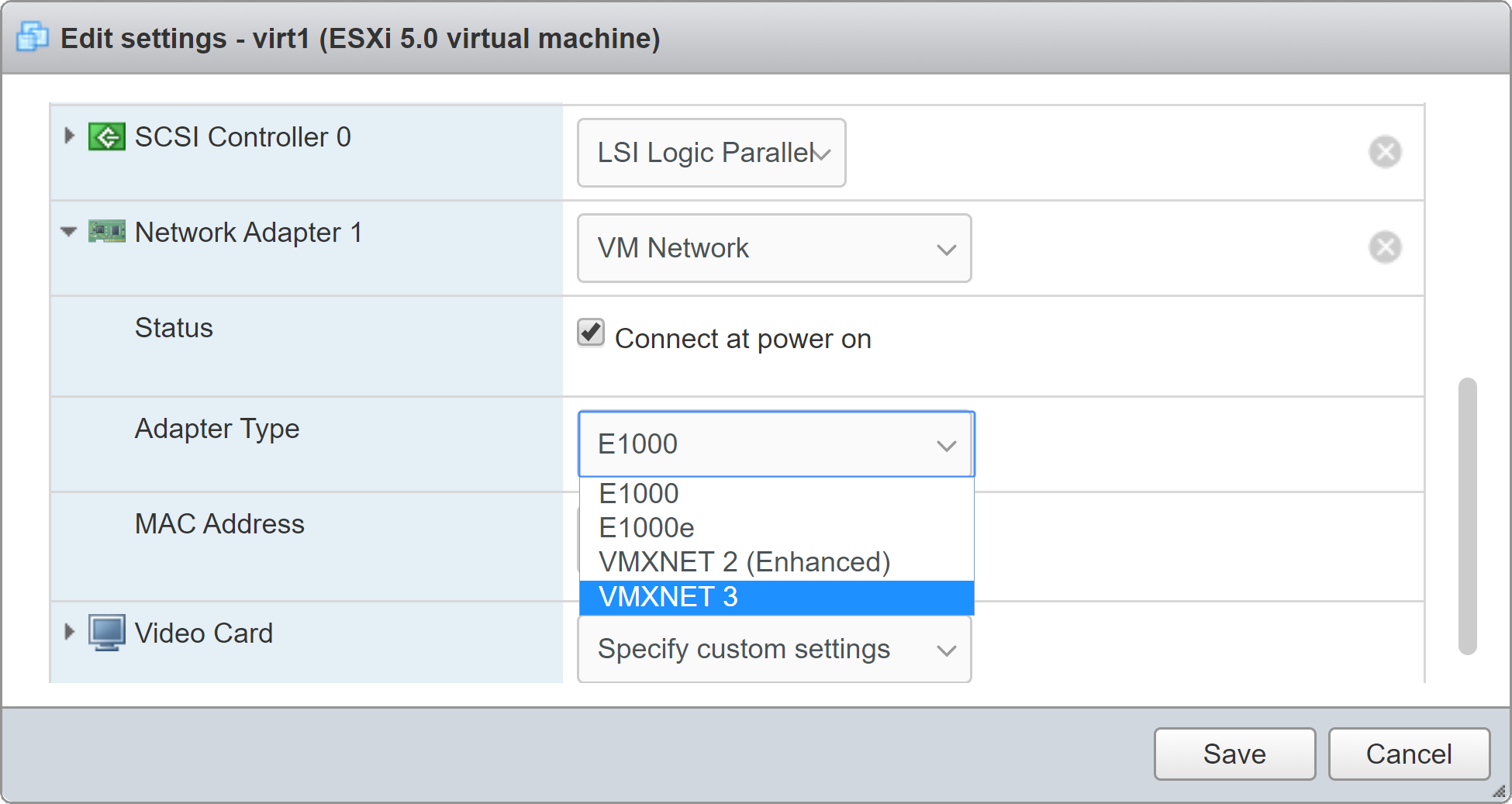

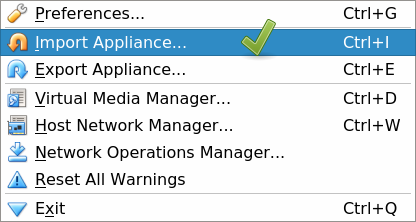

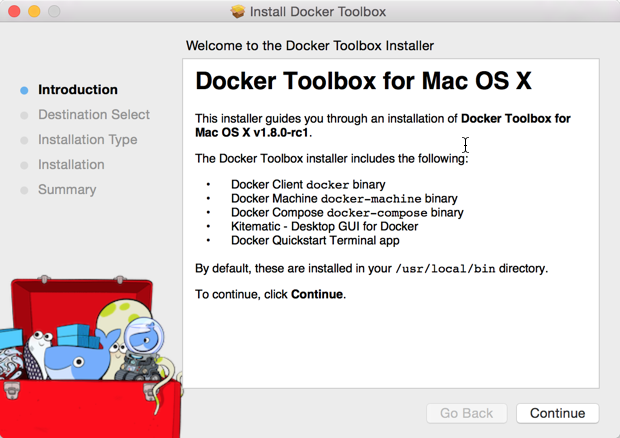

Running a Docker container from an image: `docker run hello-world` runs the hello-world image and verifies that Docker is correctly installed and working. `docker help` returns a list of Docker commands. `docker version` returns information about the Docker version running on your local machine.

In this classroom you will find a mix of labs and tutorials that will help Docker users, including SysAdmins, IT pros, and developers. The `docker build` command builds an image from a Dockerfile. See also the spinlud/emr-pyspark-docker-tutorial repository on GitHub.

When should a PySpark UDF be marked as nondeterministic? Take an example: say I have to take 10 points from Slytherin (15) and add them to Gryffindor (5).

To install PySpark on AWS, you first need to create an instance. For this example we're using the jupyter/pyspark-notebook image created by the Jupyter Docker Stacks project.

Docker Toolbox users on Windows need to configure two items. Memory: open Oracle VirtualBox Manager; if you double-clicked Docker Quickstart Terminal and Docker Toolbox started successfully, you will see a virtual machine named default. Mac users should open Docker Desktop -> Preferences -> Resources -> Memory.

Now start the Vagrant box and provision it. Note: since Apache Zeppelin and Spark both use port 8080 for their web UI, you might need to change Zeppelin's port.

The `-f` flag for `docker rm` stops the container if it is running and then removes it. Docker needs write access to the drive where the docker-deploy-. A Docker image that is being executed is called a Docker container.

Spark supports programming in Scala, Java, Python, and R; PySpark is its Python API. As a follow-up, see the tutorial Learning the Ropes of the HDP Sandbox.

A DataFrame is a distributed collection of observations (rows) with named columns, just like a table in a relational database. To build the cluster, we need to create, build, and compose the Docker images for the JupyterLab and Spark nodes. It's also the first step in being able to use a container to, well. Tools such as Docker Machine and boot2docker were once required because Docker did not have native support on Mac and Windows: you had to run a virtual machine, and those tools gave you a way to do that. If you have a Mac and don't want to bother with Docker, another option to quickly.

In this article, I'm going to demonstrate how Apache Spark can be used to write powerful ETL jobs in Python.

Why Docker? Docker is a very useful tool for packaging software builds and distributing them, and it is popular among Python developers. If you're already familiar with Python and work with data day to day, PySpark will help you build more scalable processing and analysis of (big) data. (See also: Predicting Credit Risk by using PySpark ML and Docker, Part 1.) If you need to learn more about Docker networking in general, see the overview. That means you can freely copy and adapt these code snippets and you don't need to give.

PySpark notebook with Docker. In Apache Spark, we can read a CSV file and create a DataFrame with the help of SQLContext; since Spark 2.0, SparkSession is the recommended entry point and replaces SQLContext. Why use Jupyter Notebook? More advanced users probably don't run PySpark code from a Jupyter notebook in a production environment. Example screenshots and code samples are taken from running a PySpark application on the Data Mechanics platform, but this example can easily be adapted to other environments.
The first argument is the second DataFrame that you want to join with the first one.

You, too, can learn PySpark right on your Linux (or other Docker-compatible) desktop in a few short, easy steps! jupyter/pyspark-notebook is the Docker image. If there is a need to add a path to python. Jupyter Notebook Python, Scala, R, Spark, Mesos Stack from github.com/jupyter/docker-stacks. To run a command inside a container, you'd normally use `docker exec`. In the notebook, import pyspark and create a SparkContext (`sc = pyspark.SparkContext(...)`).

All the code will be linked from my GitHub account, where you can also download the data. All you need to do is set up Docker and download the Docker image that best fits your project. `docker run -it --rm -p 8888:8888 jupyter/pyspark-notebook` runs a container that starts a Jupyter notebook server. You can also use `-v` to persist data generated in the notebook outside the Docker container.

By leveraging some amazing resource isolation features of the Linux kernel, Docker makes it possible to easily isolate server applications into containers, control resource allocation, and design simpler deployment pipelines. Suboptimal container images take longer to build, deploy, start up, and execute. The purpose of this tutorial is to learn how to use PySpark. This post is not meant to be an extensive tutorial for Airflow; instead, we'll take the Zone Scan data processing as an example to show how Airflow improves workflow management. All Spark examples provided in this PySpark (Spark with Python) tutorial are basic, simple, and easy to practice for beginners who are keen to learn PySpark and advance their careers in big data and machine learning. A Docker container is a package that contains the application code and the libraries required to run the application.
Normally, in order to connect to JDBC data… In "Setting up PySpark for Jupyter Notebook – with Docker", Harini Kannan observes that when you google "How to run PySpark on Jupyter", you get so many tutorials showcasing so many different ways to configure the IPython notebook to support PySpark that it's a little bit confusing.

…yaml with the content given below:

This tutorial will act as an example of this portability: at the end of it, if I have done my job right, you should be able to run this application locally with a simple docker command. Apache Spark is written in the Scala programming language. Note that the column must already be a timestamp type to appear in this dropdown. Pysparkling provides a faster, more responsive way to develop programs for PySpark. The names of the key column(s) must be the same in each table.

5 by default or you can get a higher. Create a file named entrypoint. You will learn how to apply practical networking and storage techniques to. An alternative approach on Mac.

Before going wild, you can run the usual Hadoop-isms to set up a workspace: `docker exec -it mycdh hadoop fs -ls /` and `docker exec -it mycdh hadoop fs -mkdir -p /tmp/blah`.

In this post we will cover the steps needed to create a Spark standalone cluster with Docker and docker-compose. The split function is defined in the pyspark.sql.functions module. Analyze data for an electric car company and produce an easy-to-read dashboard using Cloudera Data Visualization. In this tutorial we have stepped sequentially through the varying levels of complexity in setting up a Spark cluster running inside Docker containers. See also: Docker Tutorial: Apache Spark (spark-shell & pyspark) on Cloudera quickstart. I will need to be able to fully understand both the Dockerfile and the launch script (5. PySpark supports most of Spark's features, such as Spark SQL, DataFrame, Streaming, MLlib (machine learning), and Spark Core.

Written by Matthias Lübken from GiantSwarm. The source code for this tutorial can be found on GitHub. The `docker build` command executes the Dockerfile and builds a Docker image from it.


Author: Shan
